Analogue Video

Each of our eyes has 6 to 7 million receptors that transfer what we see, via one million nerves, to the brain. Although it’s impossible for any technology to match this quality, it can create some effective illusions, assisted by the quirks of human physiology and psychology.

A movie consists of a sequence of photographic images that are passed quickly before the eye, giving the impression of a moving image. As bitmap images, movies can consume a huge amount of memory and disk space, although this can be overcome by using lossy compression, usually tailored to the shortcomings of the human eye. Such processes normally divide the picture into fixed elements and moving elements, so that only information about the moving parts is conveyed.

An animation, on the other hand, usually consists of man-made images, often generated by a computer, but also formed into a sequence. Fortunately, such movies often contain vector images that use very little data space. Better still, the movement of the elements within an animation can be simply described in mathematical terms, requiring no extra information to be used for fixed elements.

The designer of a video system has to decide on what quality or resolution is required. This sets the size of the smallest component to be transmitted, usually known as a picture element or pixel. In digital video systems each pixel is square or rectangular, the entire picture being replicated by assembling these blocks together, both side by side and one above the other. In analogue video technology the picture is divided into lines, effectively of the same height as a pixel.

In standard definition (SD) video there are 525 or 625 lines available, although only around 500 or 600 are used for the picture itself. In high definition (HD) video, a much greater resolution is obtained by using between 1050 and 1250 lines, of which 720 or 1080 may be used for the image.

The proportions of a picture are described by its aspect ratio. For example, a picture that’s 4 inches wide and 3 inches high has an aspect ratio of 4:3, which remains unchanged should the image be enlarged or reduced in size. As it happens, this is the standard ratio used for SD video images. This and other standard ratios are shown in the following table.

| Format | AR | W/H | Notes |
|---|---|---|---|
| Photo | 5:7 | 0.71 | Not |
| Photo | 8:10 | 0.80 | Not |
| SD | 4:3 | 1.33 | Equivalent |
| Digital | 3:2 | 1.5 | Oblong |
| SD | 14:9 | 1.56 | Used |
| HD | 16:10 | 1.60 | Square |
| Wide | 16:9 | 1.78 | 'Letter |
| Cinema | 2.21:1 | 2.21 | 19.89:9 |
| Cinema | 2.35:1 | 2.35 | 'Letter |

• 1920 × 1200 pixels, as on an Apple 23-inch Cinema HD Display

* Viewable on 16:10 screen, but with loss of ends or black bars above and below
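As a rough check on the width-to-height figures in the table, each ratio can simply be divided out. The following is only an illustrative sketch: the function name `aspect_decimal` is an assumption made for this example and doesn't come from any video standard.

```python
from fractions import Fraction

def aspect_decimal(width, height):
    """Return width divided by height, rounded to two decimal places."""
    return round(width / height, 2)

# Ratios taken from the table above
ratios = {"5:7": (5, 7), "4:3": (4, 3), "3:2": (3, 2),
          "14:9": (14, 9), "16:10": (16, 10), "16:9": (16, 9)}

for name, (w, h) in ratios.items():
    print(name, aspect_decimal(w, h))

# Enlarging or reducing a picture leaves its aspect ratio unchanged
print(Fraction(4, 3) == Fraction(8, 6) == Fraction(640, 480))  # True
```

The same division explains the 19.89:9 entry, since 2.21 multiplied by 9 gives 19.89.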

A 3:2 aspect ratio can actually consist of oblong pixels that squeeze the picture into a 4:3 ratio.

A 14:9 ratio (almost 3:2) can be used to improve the appearance on a 4:3 screen, although unpleasant black bars appear at the top and bottom of the picture. This letter box presentation is objectionable to many viewers, the author included.

Most video systems use red, green and blue (RGB) or component video (YUV) coding. RGB is the oldest and simplest method, although conveying images in this form isn’t always ideal.

The range of colours available with RGB coding is known as the RGB colour space. Similarly, the range of YUV colours is known as the YUV colour space. Although most colours can be represented by both systems, several colours fall outside the range covered by such codings. The values in the two different systems are related by the following equations:-

Y = (0.299 × R) + (0.587 × G) + (0.114 × B)

U = B - Y

V = R - Y

The normalised range of Y is between 0 and 100%. With these equations, U lies in the range of roughly -89% to +89%, whilst V lies in the range of roughly -70% to +70%.

The value of V can be plotted against the value of U on a graph, with V on the vertical axis. The point where U and V are both 0% represents unsaturated white, grey or black, whilst points towards the edges of the graph represent pure colours such as red, magenta, blue, cyan, green or yellow.
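The conversion can be sketched in a few lines of Python. This is a minimal illustration rather than a broadcast-accurate implementation: it assumes RGB values normalised to the range 0.0 to 1.0, uses the standard blue weight of 0.114 (so that the three luminance weights add up to one), and leaves U and V unscaled. The function name `rgb_to_yuv` is an assumption made for this example.

```python
def rgb_to_yuv(r, g, b):
    """Convert normalised RGB (0.0 to 1.0) to luminance and colour-difference values."""
    y = 0.299 * r + 0.587 * g + 0.114 * b  # luminance: the weights sum to 1
    u = b - y                              # blue colour difference
    v = r - y                              # red colour difference
    return y, u, v

# Pure blue gives the largest value of U, pure red the largest value of V
_, u_blue, _ = rgb_to_yuv(0.0, 0.0, 1.0)
_, _, v_red = rgb_to_yuv(1.0, 0.0, 0.0)
print(round(u_blue, 3))  # 0.886
print(round(v_red, 3))   # 0.701
```

Any grey, where R, G and B are equal, gives U and V values of zero, which is why such colours sit at the centre of the graph.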

Analogue Video

In analogue video systems, as used prior to the arrival of digital technology, the image is broken up and reconstructed by scanning, which splits the picture into lines instead of pixels.

The electron beam in a traditional video camera creates a spot that moves across each line to extract the picture information. Having reached the right-hand end of a line, it moves quickly leftwards and down to the next line. A complete image is created by scanning from the top left-hand corner to the bottom right of the picture.

Traditionally, an analogue picture is created by another moving dot in a cathode ray tube (CRT), whose intensity is varied as the beam sweeps horizontally across the screen, moving slowly downwards after each line to create an image pattern known as a raster. Each complete image is known as a frame and the number of frames per second (frm/s or fps) is called the frame rate. The NTSC television system uses a rate of 30 Hz, whilst the PAL system runs at 25 Hz.

Fortunately, the human eye has persistence of vision, retaining an image on the retina for a short time after it actually disappears. CRT displays also have persistence, which allows systems to be designed with a frame rate that doesn’t produce any noticeable flicker.

Although analogue video doesn’t divide the image into true pixels, the height of each line can be considered to correspond to the height of a pixel. The equivalent ‘pixel width’ is set by the frequency response, also known as bandwidth or horizontal resolution, of the signal. On initial consideration, you might think that this resolution should equal one pixel. In practice, it can be much less, partly because of the illusory effect of the visible horizontal lines on the human eye.

Interlaced scanning, also known as interleaved scanning, is employed in traditional analogue television broadcasting. In this system, the ‘even’ lines of the image are created first, producing what is known as an even field. A scan is then made of the ‘odd’ lines, creating an odd field. Together, each pair of fields makes up the entire picture, commonly known as a frame.

This means that the ‘even’ lines 20 and 22 are in one field whilst the ‘odd’ lines 21 and 23 are in the other. Such a technique has the effect of doubling the subjective flicker rate, so that a system based on, for example, a frame rate of 25 frm/s actually appears to flicker at 50 Hz.
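The division of a frame into two fields can be illustrated with a short sketch. It treats a frame as nothing more than a list of scan lines, which is a simplification; the function names `split_fields` and `weave` are assumptions made for this example.

```python
def split_fields(lines):
    """Split a frame (a list of scan lines) into two fields of alternate lines."""
    first_field = lines[0::2]   # every other line, starting with the first
    second_field = lines[1::2]  # the lines in between
    return first_field, second_field

def weave(first_field, second_field):
    """Re-interleave two fields into a full frame."""
    frame = []
    for a, b in zip(first_field, second_field):
        frame.extend([a, b])
    # A frame with an odd number of lines leaves one extra line in the first field
    frame.extend(first_field[len(second_field):])
    return frame

frame = list(range(20, 26))          # scan lines numbered 20 to 25
even, odd = split_fields(frame)
print(even)                          # [20, 22, 24]
print(odd)                           # [21, 23, 25]
print(weave(even, odd) == frame)     # True

frame_rate = 25                      # frm/s
field_rate = 2 * frame_rate          # two fields are shown for every frame
print(field_rate)                    # 50
```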

A simpler form of scanning, known as progressive scanning, non-interlaced scanning or non-interleaved scanning, is used in computers and more advanced video systems. This scans all of the lines in a sequential order. Fortunately, this doesn’t suffer from some of the artifacts associated with interlaced scanning, such as wheels that appear to spin backwards.

Unfortunately, traditional interlaced images aren’t compatible with computer-based media, such as CD-ROM or the World Wide Web, neither of which uses television-based interlacing. Problems can also occur when feeding standard video material into some types of computer or video capture card, although modern hardware and software can often accommodate such signals.

Difficulties also occur in the opposite direction, when a non-interlaced image from a computer is fed into video equipment that requires an interlaced signal. Typically, this causes the picture to jitter up and down at the frame rate, a highly unpleasant effect. Fortunately, this can be overcome by using modern hardware that provides a convolved video output. In this type of signal, adjacent pairs of lines are smoothed to reduce flicker, but with a corresponding reduction in vertical resolution.

The video signal itself is interspersed by synchronisation pulses that keep the scanning system at the receiver in step with that used at the source. In a composite video signal a positive voltage is used for picture information, whilst the synchronisation pulses are negative, making it easy to separate the components at a receiver. The horizontal synchronisation pulse occurs at the end of each line whilst the vertical synchronisation pulse appears at the end of the last line in each field.

In reality, the vertical synchronisation ‘pulse’ consists of a train of pulses, each identical to the horizontal pulse. This rapidly repeating sequence is detected in the receiver by using a process known as integration. Such narrow pulses, effectively at a high frequency, avoid any problems that could be caused by limitations in the low-frequency performance of the communications channel.

Digital Video

Digital video material is normally based on analogue PAL or NTSC standards, but is often ‘stripped down’ to reduce the amount of data. Typically, a standard NTSC image is reduced to 320 × 240 pixels (half the normal resolution in each direction) with a frame rate of 15 frm/s (half the normal frame rate, or a quarter of the interlaced field rate). Inevitably, this compromises picture quality, although this isn’t noticeable when viewed on a small area of a computer screen. Unlike normal video signals, interlacing isn’t used.
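The saving obtained by stripping down the picture can be estimated by counting the pixels handled each second. This sketch assumes a full NTSC picture of 640 × 480 square pixels at 30 frm/s and ignores compression entirely; the function name `pixels_per_second` is an assumption made for this example.

```python
def pixels_per_second(width, height, frame_rate):
    """Number of pixels that must be conveyed each second."""
    return width * height * frame_rate

full = pixels_per_second(640, 480, 30)
reduced = pixels_per_second(320, 240, 15)

print(full)             # 9216000
print(reduced)          # 1152000
print(full // reduced)  # 8
```

Halving both dimensions cuts the pixel count to a quarter, and halving the frame rate halves it again, giving an eight-fold reduction before any compression is applied.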

The following formats are commonly used:-

| File | Notes |
|---|---|
| QuickTime | Apple |
| MPEG | Standard |
| DV | Digital |
| AVI | Audio |
| ASF | Active |
| VOB | Video |

More information about some of these formats appears in the following sections.

QuickTime Movies

A QuickTime movie is created using Apple’s QuickTime technology, a system that allows the fast-moving data within a multimedia file to be handled by a relatively slow computer.

QuickTime employs compression to save disk space. Unfortunately, numerous different compression systems are used, even within a single file format. So your applications must also be able to understand the compression system used in each document as well as the file format.

The software used to compress or decompress a file is known as a coder-decoder or codec. Many of these codecs are built into QuickTime itself, while others can be provided as separate files.

These separate files don’t modify the operating system, although they must be present at startup to be effective.

Sometimes, when opening an odd file format, QuickTime Player shows a white movie window and a dialogue saying something like Required compressor can’t be found. If this happens, leave the window open and select Get Info from under the Movie menu. Then set the pop-up menus as shown below. Hopefully, the Data Format information will help you obtain a suitable codec for your file.

QuickTime accepts files created using the following video codecs:-

| Codec | Notes |
|---|---|
| Animation | Run |
| Apple | Bitmap |
| Cinepak | For |
| Component | High-quality |
| DV-NTSC | Digital |
| DV-PAL | Digital |
| DVCPRO-PAL | Professional |
| Graphics | Simple |
| H.261 | High-quality |
| H.263 | Improved |
| H.264/AVC | Advanced |
| Intel | Older |
| Intel | Older |
| Intel | Older |
| Microsoft | Run |
| Microsoft | Used |
| Motion | Broadcast-quality |
| Motion | Variation |
| None | Large |
| Photo-JPEG | Photographic |
| Planar | Simple |
| PNG | General |
| Sorenson | Powerful |
| Sorenson | Development |
| TGA | Used |
| TIFF | Used |
| Video | Optimised |

* Also available for file export

• Real-time coding not supported in QuickTime 5

† Mac OS X 10.4 or higher only

Specific information on some of the standard codecs is provided below.

The Animation codec is suitable for cartoons or similar graphics that contain solid blocks of colour.

The DV-NTSC and DV-PAL codecs correspond to the NTSC and PAL coding systems used in MiniDV and DVCam recording equipment. The process is similar to standard MPEG coding (see below), except that 4:1:1 colour sub-sampling is used for NTSC video and 4:2:0 is used for PAL.

The professional DVCPRO-PAL codec is designed for use with matching PAL equipment. There are actually two standards: DVC Pro 50, which is based on a data rate of 50 Mbit/s and 4:2:2 colour sub-sampling, and DVC Pro 25, running at 25 Mbit/s and employing the more common 4:1:1 colour system.

Cinepak is a rather outdated codec, although it can give reasonable results on a powerful computer. It compresses files by up to 10:1, ensures acceptable quality and colour reproduction and is particularly good for high frame rates. However, the compression process can take an enormous amount of time. In addition, the results are often unsatisfactory with data rates of less than 250 KB/s.

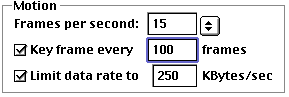

With this codec, the options in the Motion pane of the dialogue are normally set as shown below:-

Each key frame contains a complete image that’s created independently of any preceding frames, while the frames between the key frames are generated from earlier frames using inter-frame compression, a process that can reduce the size of a movie by half. For a small file, simply choose a low key frame rate by entering a large number in the dialogue. Unfortunately, very low rates can cause the movie to jump as you shuffle through it.
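The trade-off between key frames and the frames in between can be demonstrated with a toy model. The sketch below is not how Cinepak or any other QuickTime codec actually works: it assumes each frame is just a list of pixel values, stores key frames in full and stores only the changed pixels for the other frames. The names `encode`, `decode` and `stored_values` are assumptions made for this example.

```python
def encode(frames, key_interval):
    """Store a full key frame every `key_interval` frames and only the
    changed pixels for the frames in between."""
    encoded = []
    previous = None
    for index, frame in enumerate(frames):
        if index % key_interval == 0:
            encoded.append(("key", list(frame)))
        else:
            changes = {i: p for i, (p, q) in enumerate(zip(frame, previous)) if p != q}
            encoded.append(("delta", changes))
        previous = frame
    return encoded

def decode(encoded):
    """Rebuild every frame, working forwards from each key frame."""
    frames = []
    current = None
    for kind, data in encoded:
        if kind == "key":
            current = list(data)
        else:
            current = list(current)
            for i, p in data.items():
                current[i] = p
        frames.append(current)
    return frames

def stored_values(encoded):
    """Count the pixel values that have to be stored."""
    return sum(len(data) for _, data in encoded)

frames = [[0, 0, 0, 0], [0, 1, 0, 0], [0, 1, 1, 0], [0, 1, 1, 1]]
print(stored_values(encode(frames, 2)))      # 10
print(stored_values(encode(frames, 4)))      # 7
print(decode(encode(frames, 4)) == frames)   # True
```

A larger interval between key frames stores less data, but any frame can only be rebuilt by working forwards from the previous key frame, which is one way of picturing why a movie with few key frames jumps as you shuffle through it.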

The Graphics codec is suitable for cartoons or similar graphics that contain solid blocks of colour. Files are half the size of those created with the Animation codec, but without any loss of quality.

The H.261 codec is suitable for movies that don’t contain too much movement and where fast playback is required at the recipient’s machine. This codec isn’t ideal for transferring data via a modem.

H.263 is an improved version of H.261, giving better quality and a faster coding speed, although demanding more from the recipient’s computer. This system is used for video conferencing via a modem.

Also known as Advanced Video Coding (AVC), the H.264 standard, which accommodates all kinds of video data, from images used with iChat to high definition (HD) television pictures, is likely to replace the older MPEG-2 file format.

Motion JPEG compression, also known as M-JPEG, uses a series of JPEG images to create the movie. Unlike other systems, every frame is treated as a key frame, with control of odd and even frames. As a consequence, this codec can create material of broadcast quality, assuming that you’ve selected Best on the Quality slider. Compression of 2.2:1 is used for general-purpose applications.

The PNG codec provides good general-purpose compression for both graphical and dithered images, and includes support for an alpha channel.

The Sorenson Video codec produces excellent quality at low data rates and is particularly suitable for movies with limited movement. Unlike Cinepak, which needs a data rate of 200 KB/s for a 320 × 240 pixel movie, Sorenson Video works well at between 50 and 100 KB/s. In fact, the use of a higher rate can overload the recipient’s computer, causing picture frames to skip.

Unfortunately, Sorenson Video decoding demands a lot of processing power. For example, a typical movie of 320 × 240 pixels at 15 frm/s is acceptable to most modern computers, but a full-motion 640 × 480 movie at 30 frm/s is simply too much for some machines.

A 320 × 240 image can be expanded on the screen with good results.

A 640 × 480 movie, running at 30 frm/s, can be converted to give an aspect ratio of 16:9 and a frame rate of 24 frm/s. The result uses half the data and looks better than the original.

For Internet use, you should initially set the Sorenson Video dialogue to look something like this:-

The recommended data rates for different kinds of modem are given in the following table:-

| Modem | Data |
|---|---|
| Dial-up | 2.5 |
| Dial-up | 5 |
| ISDN | 12 |
| T1 | 20 |

To create a small movie on CD-ROM you should use an image of 320 × 240 pixels, or 240 × 180 if you want to accommodate an older drive. The Sorenson Video dialogue should look like this:-

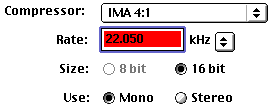

The matching sound track is commonly set up as follows:-

The Video codec produces larger files than Cinepak, although the compression process is usually very fast. As with Cinepak, you should use the lowest possible key frame rate to minimise file size.

MPEG Movies

The Moving Picture Experts Group (MPEG), a working group of the International Organization for Standardization (ISO), has developed a range of standards for compressing movies and sound. The various MPEG files that they have devised can be played on all modern computers.

Under normal circumstances, a reasonable-quality video signal demands a transfer rate of 22 MB/s or more. MPEG compression, however, can reduce this to 200 KB/s or less.
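The scale of these figures can be checked with some simple arithmetic. The sketch below assumes, purely for illustration, an uncompressed picture of 720 × 576 pixels at 25 frm/s with 2 bytes per pixel, and takes 1 MB as 1000 KB; the function name `uncompressed_rate` is also an assumption made for this example.

```python
def uncompressed_rate(width, height, frame_rate, bytes_per_pixel):
    """Data rate of uncompressed video in bytes per second."""
    return width * height * frame_rate * bytes_per_pixel

# Assumed example picture: 720 x 576 pixels, 25 frm/s, 2 bytes per pixel
rate = uncompressed_rate(720, 576, 25, 2)
print(rate / 1_000_000)   # 20.736 (MB/s)

# Compression ratio implied by the figures quoted in the text
ratio = 22_000 / 200      # 22 MB/s reduced to 200 KB/s
print(ratio)              # 110.0
```

In other words, the figures quoted correspond to a compression ratio of about 110:1.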

The following variations of MPEG files can be encountered:-

MPEG-1 provides a quarter-size image of 352 × 240 pixels for an NTSC image or 352 × 288 for the PAL system, but at the full broadcast frame rate of 30 or 25 frm/s. This kind of MPEG file is used to create Video CD (VCD) recordings, using a data rate of around 1.1 Mbit/s, and is sometimes used for still images on a CD-Video or for compressing audio material.

Note that the horizontal pixel count doesn’t match a quarter-size image: you might have expected sizes of 320 × 240 for NTSC and 384 × 288 for PAL, corresponding to half of 640 × 480 and 768 × 576 respectively. In fact, MPEG-1 doesn’t use square pixels, as found on computer screens, but employs slightly rectangular pixels, around 10% narrower than a square for NTSC and around 9% wider for PAL.
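The pixel shape implied by these sizes can be worked out by comparing the width that a square-pixel image would need with the width actually stored. This is only a rough check based on the sizes quoted above; the function name `pixel_width_to_height` is an assumption made for this example.

```python
def pixel_width_to_height(square_width, stored_width):
    """Width of one stored pixel relative to its height, when the stored
    line is stretched to the width a square-pixel image would need."""
    return square_width / stored_width

ntsc = pixel_width_to_height(320, 352)
pal = pixel_width_to_height(384, 352)

print(round(ntsc, 3))  # 0.909
print(round(pal, 3))   # 1.091
```

A value below one indicates pixels that are narrower than they are high, whilst a value above one indicates pixels that are wider than they are high.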

MPEG-2 gives a full-size picture, also known as full-motion video (FMV), at the standard frame rates of 30 or 25 frm/s. This kind of file is used for both DVD-Video and digital video broadcasting (DVB) systems. Typically, an MPEG-2 data stream runs at 5.7 Mbit/s.

MPEG-2 accommodates different aspect ratios, stretching the pixels to match. The ratios include the traditional 4:3 television format, 16:9 for wide-screen television and 2.21:1, as used in Cinemascope films. A 1:1 ratio is also available for multimedia presentations on a computer.

Irrespective of ratio, 720 × 480 pixels are used for NTSC and 720 × 576 for PAL, the same as Digital Video (DV). On a computer, which always has square pixels, a 16:9 picture usually appears in a letter box format, so that on a 640 × 480 screen only 640 × 360 pixels are used.
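The letter box figures can be confirmed with a short calculation. This sketch assumes square pixels and a picture that is wider in shape than the screen, so that it fills the full screen width; the function name `letterbox` is an assumption made for this example.

```python
def letterbox(screen_width, screen_height, aspect_w, aspect_h):
    """Fit a picture across the full screen width and return the picture
    height and the height of each black bar, both in pixels."""
    picture_height = screen_width * aspect_h // aspect_w
    bar_height = (screen_height - picture_height) // 2
    return picture_height, bar_height

print(letterbox(640, 480, 16, 9))  # (360, 60)
```

On a 640 × 480 screen, then, a 16:9 picture occupies 360 lines, leaving a black bar of 60 lines above and below.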

Both MPEG-1 and MPEG-2 files can also convey a high-quality soundtrack, provided by another standard known as MPEG Layer-2 Audio. This shouldn’t be confused with MPEG Layer-3 Audio, also known as the MP3 format, which is used for sending audio over the Internet.

Both Layer-2 and Layer-3 use psychoacoustic coding techniques, exploiting the masking effect of one sound on another. Data rates of 192 kbit/s (comparable to CD quality), 128, 96 or 64 kbit/s can be used. MPEG files can also contain sound samples in the Wave format, as used in Windows.

MPEG-4 is an emerging standard based on the QuickTime file format, providing real-time video streaming and audio streaming, as used on the Internet. At the time of writing, Ericsson intend to use an extended version of MPEG-4, known as 3GPP, for streaming such material to mobile phones.

The basic MPEG encoding process compresses each picture frame in a similar way to a JPEG, and then compresses the difference between the successive frames. This avoids the need to record too many complete frames. It does this by separating any moving foreground objects from the stationary background material. Only the motion of foreground objects is then recorded. This is rather more advanced than the simple frame difference compression process employed in QuickTime.

MPEG movies use three types of frames:-

| Type | Details |
|---|---|
| I | Intra |
| P | Compressed |
| B | Compressed |

The full MPEG process uses inter-frame compression to produce the smallest possible file. In order to know the contents of future frames the data is stored in a different order to that in which it’s displayed. This process requires the use of a buffer when viewing is in progress.
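The re-ordering can be illustrated with a simplified sketch. It assumes that each B frame depends on the next I or P frame in display order, so that this reference frame must be stored ahead of the B frames that need it; real encoders are considerably more elaborate. The function name `display_to_stored` and the frame labels are assumptions made for this example.

```python
def display_to_stored(display_order):
    """Re-order frames so that each I or P frame is stored before the
    B frames that precede it on the screen."""
    stored = []
    waiting = []                       # B frames held back for their reference
    for frame in display_order:
        if frame.startswith("B"):
            waiting.append(frame)
        else:
            stored.append(frame)       # the I or P frame goes first
            stored.extend(waiting)     # then the B frames that rely on it
            waiting = []
    stored.extend(waiting)             # any B frames left at the end
    return stored

shown = ["I0", "B1", "B2", "P3", "B4", "B5", "P6"]
print(display_to_stored(shown))
# ['I0', 'P3', 'B1', 'B2', 'P6', 'B4', 'B5']
```

During playback, the decoder has to hold P3 in a buffer until B1 and B2 have been shown, which is why a buffer is needed.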

Officially, MPEGs should use one of eight frame rates, the lowest at 24 frm/s. In practice, even lower rates of 16 or 12 frm/s are used, although the results can be awful. Files that use such non-standard rates often have the frame rate value, as stored within the document, set to an irrelevant value.

Digital Video (DV)

DV is used for high-quality video, employing 720 × 480 pixels for NTSC video and 720 × 576 pixels for PAL, whatever the aspect ratio. Although the height in pixels is the same as the number of lines used for the actual picture in the analogue standard, the width appears to give an aspect ratio of 3:2. In fact, oblong pixels are normally employed, thereby ‘squeezing’ the image into a standard 4:3 aspect.

The same 720 × 576 pixels can also give an aspect ratio of 16:9, employing oblong pixels with proportions of 1:1.422.

... 486 pixels.

Unfortunately, consumer products, analogue television, CD-ROM and the Web require images with square pixels. Special software, such as Media Cleaner Pro, must be used to provide the conversion. This particular application can also convert a DV image into standard PAL or NTSC format without introducing the picture distortion created by pixels of the wrong shape. The standard borders found in DV images can also be removed during such a conversion.
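The pixel proportions, and the size of the equivalent square-pixel image, follow from dividing the intended aspect ratio of the picture by the width-to-height ratio of the stored pixels. The sketch below only shows the arithmetic and says nothing about how any particular conversion software works; the function names `pixel_aspect` and `square_pixel_width` are assumptions made for this example.

```python
from fractions import Fraction

def pixel_aspect(width, height, display_aspect):
    """Width of each pixel relative to its height."""
    return display_aspect / Fraction(width, height)

def square_pixel_width(height, display_aspect):
    """Width in square pixels of a picture with the same height and shape."""
    return round(height * display_aspect)

# PAL DV at 16:9: each pixel is about 1.422 times as wide as it is high
print(round(float(pixel_aspect(720, 576, Fraction(16, 9))), 3))  # 1.422

# Equivalent square-pixel sizes
print(square_pixel_width(576, Fraction(4, 3)))   # 768
print(square_pixel_width(576, Fraction(16, 9)))  # 1024
print(square_pixel_width(480, Fraction(4, 3)))   # 640
```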

The following DV formats are in common use:-

| Format | | Mbit/s | Notes |
|---|---|---|---|
| DV/ | DV | 25 | Standard |
| DV | DV | 50 | - |
| DVC | DV- | 100 | High |

Video streaming lets you watch a movie over the Internet in real time, rather than having to download it and view it later. This kind of mechanism is essential for sending television broadcasts over the Internet. Unfortunately for most of us, the available bandwidth on the Internet is very limited, requiring the use of advanced streaming software.

QuickTime accommodates both Real-time Transport Protocol (RTP) and Real-Time Streaming Protocol (RTSP), which are used together for RTSP streaming. However, until the arrival of QuickTime 6, there was no easy way to handle MPEG-4 files, the ideal streaming format.

Multimedia material can be sent via RTSP in RealVideo format, also known as RealMedia or RealG2, and viewed in RealPlayer (RealNetworks). Microsoft’s Windows Media system uses Active Streaming Format (ASF) files for video streaming, although the older Video for Windows (Vfw) system uses Audio Video Interleave (AVI) for movies. Alternatively, you can use Digital Video (DV), MPEG-1 or a QuickTime movie with QuickTime codecs.

QuickTime files can be streamed, although only if you have a QuickTime Streaming Server. A pointer file on your HTTP server, more commonly known as a Web server, should link a hinted movie file, stored in the default directory of the Streaming Server, to your Web page.

You can use a modern version of QuickTime Player to create suitable movie files. After selecting Export you’ll need to select Movie to QuickTime Movie and a streaming rate under the Use menu. For a normal modem connection you should choose one of the alternatives shown in the Streaming 20 kbps section. For further choices you should click on Options, which presents you with a window showing alternatives for Video, Sound and Prepare for Internet Streaming.

Modern versions of the QuickTime plug-in allow the recipient to use alternative movies suited to their connection speed. Your site should include a reference movie, with a name such as ref.mov, pointing to other files designed for a particular kind of link, such as 28K.mov, 56K.mov and ISDN.mov, intended for a 28 kbit/s modem, 56 kbit/s modem or ISDN link respectively. Also, a flattened movie, with a name such as movie.mov, should be sent to ensure that everyone can see it. Special software, such as MakeRefMovie (Apple), can be used to create such documents.
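The idea behind a reference movie can be pictured as a simple look-up by connection speed. The sketch below is purely conceptual and doesn’t represent the way in which the QuickTime plug-in or MakeRefMovie actually works. The file names come from the example above, whilst the function name `choose_movie`, the speed thresholds and the figure of 128 kbit/s for ISDN are assumptions made for this example.

```python
# (minimum connection speed in kbit/s, movie file), slowest first
ALTERNATIVES = [
    (28, "28K.mov"),
    (56, "56K.mov"),
    (128, "ISDN.mov"),   # assumed ISDN speed
]
FALLBACK = "movie.mov"   # flattened movie that everyone can see

def choose_movie(connection_kbits):
    """Pick the most demanding movie that the connection can handle."""
    chosen = FALLBACK
    for minimum, name in ALTERNATIVES:
        if connection_kbits >= minimum:
            chosen = name
    return chosen

print(choose_movie(14))    # movie.mov
print(choose_movie(33))    # 28K.mov
print(choose_movie(56))    # 56K.mov
print(choose_movie(128))   # ISDN.mov
```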

Video streaming via a standard modem is seriously limited. For example, a 28.8 kbit/s modem can only handle a frame rate of 2 to 3 frm/s with a picture size of 128 × 96 or 160 × 120 pixels. Where there’s little actual picture action or where a 56 kbit/s modem is used, you can use 4 to 6 frm/s with a size of 176 × 144 or 192 × 144 pixels. The actual data rate for a 28.8 kbit/s modem shouldn’t exceed 22 kbit/s, corresponding to 12 or 14 kbit/s for the movie plus another 8 kbit/s for sound.
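The following sketch shows how little data such a budget leaves for each picture. It takes the figures quoted above, with 1 kbit taken as 1000 bits, and assumes a movie of 160 × 120 pixels at 2 frm/s; the function names `video_budget` and `bits_per_frame` are assumptions made for this example.

```python
def video_budget(total_kbits, sound_kbits):
    """Data rate left for the picture once the sound has been allowed for."""
    return total_kbits - sound_kbits

def bits_per_frame(video_kbits, frame_rate):
    """Average number of bits available for each frame."""
    return video_kbits * 1000 / frame_rate

video = video_budget(22, 8)
per_frame = bits_per_frame(video, 2)
pixels = 160 * 120

print(video)               # 14 (kbit/s)
print(per_frame)           # 7000.0 (bits per frame)
print(per_frame / pixels)  # roughly a third of a bit per pixel
```

With only around a third of a bit available for each pixel, it’s clear why heavy compression and a low frame rate are unavoidable on such a link.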

©Ray White 2004.